Prof. Dr. Lena Kästner

Research Projects

For the Greater Good?

Deepfakes in Criminal Investigations (FoGG)

Nowadays, even non-experts can generate synthetic media relatively quickly and easily using tools based on generative artificial intelligence (genAI). Among other things, these synthetic media can be deepfakes, which sometimes look very realistic and can be used for systematic deception. While deepfakes can be employed for criminal acts, they also offer unimagined potential for law enforcement as prosecutors might employ voice, speech and video clones in undercover investigations. However, the use of deepfakes raises various technical, legal and ethical questions. What is technically possible? What is legally permitted? And what are the ethical and social consequences?

The aim of the FoGG project is to shed light on these questions from an interdisciplinary perspective. We develop specific recommendations for investigators and society on how to deal with deepfakes in criminal prosecution and beyond, and reflect on them using concrete examples from both criminal practice and prosecution. By developing an interactive demonstration tool, we bring these recommendations into practice. Ultimately, our research aims to answer the question of under what circumstances precisely, and to what extent, the use of deepfakes is socially, morally and legally acceptable.

FoGG is a consortium project funded by the Bavarian Research Institute for Digital Transformation (bidt). My co-PIs are Prof. Dr. Niklas Kühl and Prof. Dr. Christian Rückert.

Image created with DALL-E.

Machine Learning in Neuroscience

Machine learning (ML) and neuroscience have a richly intertwined history, with both the foundations of ML and its latest innovations taking inspiration from biological brains. However, this influence is not a one-way street, as the methodology of ML has become ubiquitous in neuroscience. The applications of ML to neuroscience are numerous, from the analysis of fMRI data to the control of artificial sensory systems. As well as being an invaluable tool for neuroscientists, ML, in the guise of artificial neural networks (ANNs), also plays a role as a framework for modeling biological neural systems. For instance, neuroscientists use ANNs as models of perceptual and cognitive functions, allowing them to study cognitive systems in ways previously unavailable.

But as powerful as it may be, applying ML in neuroscience also faces a number of challenges and limitations. Rather than offering a panacea, we think, ML is best viewed as offering tools and methods complementary to traditional neuroscience. At the same time, discovery strategies familiar from traditional neuroscience and life science research may be employed to supplement computational approaches to rendering ML systems explainable.

This is a joint project with Barnaby Crook.

Modelling Psychopathology:

Networks, Predictions, Multiplexes

Understanding mental disorders requires looking at a variety of different factors contributing to the development and persistence of, as well as the recovery from, mental disorders. To this end, psychiatrists study, e.g., behavioral, psychological, neurophysiological, genetic, pharmacological and environmental influences on psychopathology. Against this background, it is hardly surprising that psychiatry as a scientific discipline is characterized by a plurality of classificatory and diagnostic schemes, along with a plurality of strategies for explanation and treatment.

Prominent approaches brought forward in an attempt to better understand psychopathology include artificial neural networks, mechanisms, multiplexes or multi-layered network models, predictive processing, RDoC (sometimes combined with functional brain networks), symptom network models, etc. But what exactly are the commitments, strengths and limitations of these different approaches? To what extent is plurality an ineliminable feature of psychiatry and what explanatory approaches may promote epistemically valuable integration? Which of the approaches listed above should we favor, if any single one? And where should computational psychiatry be headed to help us diagnose, explain, and treat mental disorders? These are some of the questions I aim to shed light on in my most recent project. To kick this off, there will be a workshop on “Minds, Models and Mechanisms: Current Trends in Philosophy of Psychiatry”.

Explainable Intelligent Systems (EIS)

Artificially intelligent systems are increasingly used to augment or take over tasks previously performed by humans. These include high-stakes tasks such as suggesting which patient to grant a life-saving medical treatment, or navigating autonomous cars through dense traffic. Against this background, it is imperative that intelligent systems meet certain desiderata placed on them by society like (i) perceivable trustworthiness, (ii) the ability to make competent and responsible decisions, (iii) the possibility of adequate accountability attribution, (iv) conformity with legal rights, and (v) the preservation of fundamental moral rights. It is often claimed that meeting these desiderata presupposes that human stakeholders are able to understand intelligent systems’ behaviors and that explainability is crucial to achieve this.

EIS brings together researchers from informatics, law, philosophy, and psychology in a highly interdisciplinary research agenda. We explore what society can appropriately desire from intelligent systems, how precisely understandability contributes to meeting societal desiderata, how explainability enables this understandability, and what role different contexts may play in the process. Our aim is to develop a framework that can guide future research and policy-making regarding intelligent systems.

EIS is funded by a Full Grant from the Volkswagen Stiftung as part of the Artificial Intelligence and the Society of the Future track with myself as head PI.

Scientific Discovery & Experimentation

Many scientific explanations describe mechanisms responsible for the phenomenon to be explained. For instance, explanations of memory and learning frequently involve reference to hippocampal long-term potentiation as the implementing neural mechanism in the brain. But what do these mechanisms look like and how do scientists discover them?

Not surprisingly, mechanism discovery comes in many different forms: it uses a range of experiments, asks various kinds of research questions, is constrained by different norms depending on the context and yields differently structured mechanistic explanations. I am interested in the role that each of these factors plays and how they work together. This includes questions about how different kinds of experiments contribute to the discovery process at different stages, to what extent descriptions of mechanisms vary depending on the research question at issue, how systematic connections between different explanations can be identified to integrate scientific findings, and how available theory and methodology epistemically constrain mechanism discovery while striving for accuracy and completeness adds ontological constraints.

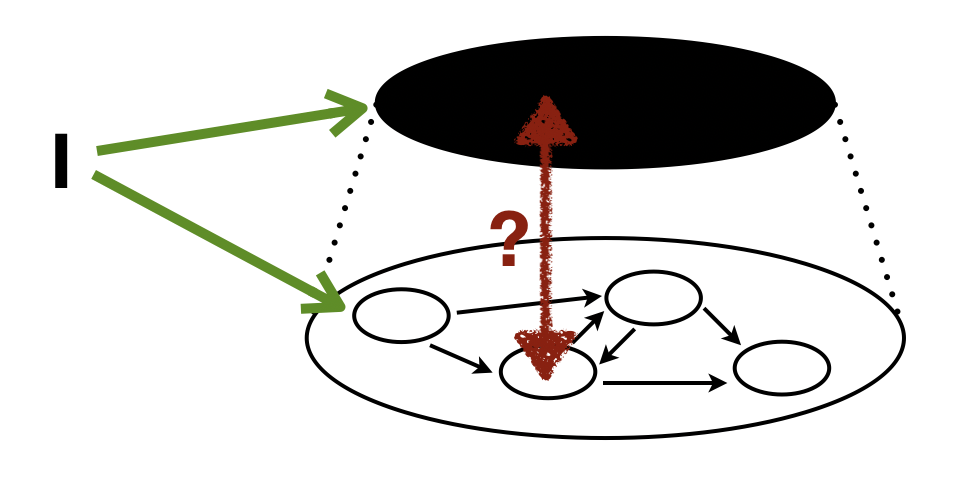

Mechanisms, Interventions, and Causation

Mechanistic explanations are ubiquitous in contemporary philosophy of science; the consensus view seems to be that scientific explanations describe mechanisms responsible for the phenomena to be explained. Two kinds of explanatory relevance relations figure in mechanistic explanations: causal (etiological) and constitutive ones. Following prominent accounts, it seems natural to analyze both these relations in terms of systematic interventions into some factor X with respect to another factor Y. However, such interventions are tailored to uncover causal relations only. Construing the constitutive relationship between parts and wholes in mechanisms in terms of interventions thus raises metaphysical, conceptual, and epistemological questions. I review and discuss the barriers that intervention-based inquiry into mechanisms encounters in a recent paper as well as in my book. I also consider solutions. Most importantly, I argue, to understand scientific explanations we must look into experimental practices beyond interventions.

“Sights & Signs”:

Cortical Sign Language Processing

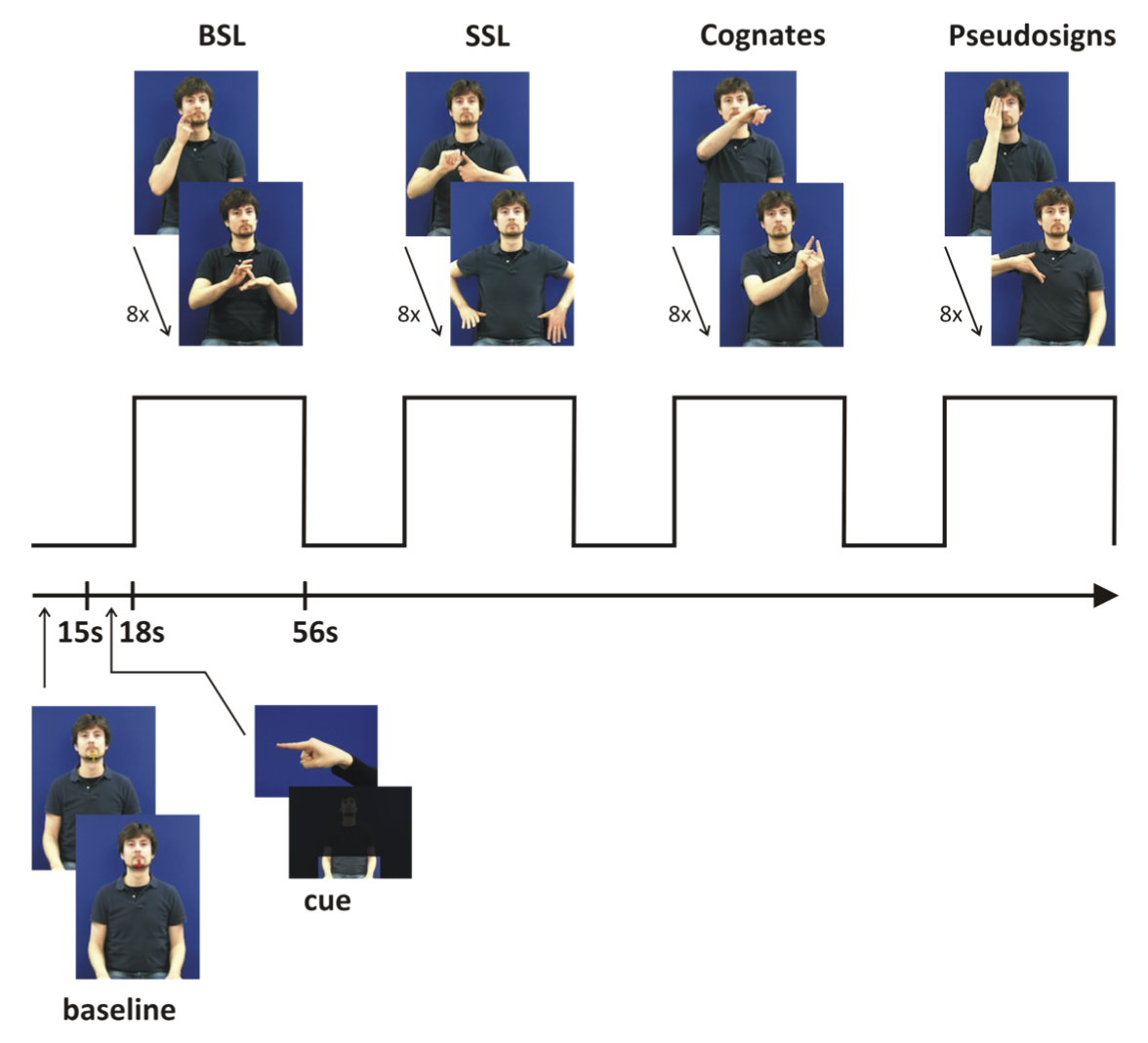

Most of our knowledge about the neural basis of language processing derives from spoken language (SpL) research. While SpL is conveyed in the auditory domain, information in sign language (SL) is processed visuo-spatially via movements of face, hands and arms. In a cross-linguistic fMRI study, “Sights & Signs” investigates how visuo-spatial material is processed in the brain depending on whether or not it looks like language.

We examine different levels of linguistic processing (semantic, phonological, visuo-spatial non-linguistic) within SLs by systematic variation of task, stimulus material, and respondent. By comparing deaf native signers’ and hearing non-signers’ cortical activations while they monitor handshapes and locations across sign-based material, we study whether the ventral “what” and dorsal “where” or “how” streams established for visual processing – and recently suggested for semantic and phonological speech processing, respectively – apply to the different levels of SL processing.

What is “Cognition”?

Most contemporary cognitive scientists want to understand how the mind works. And what the mind does is commonly called “cognition”. But what is cognition?

The dominant conception seems to be that cognition is a mediation process eliciting cognitive phenomena in response to changing environmental conditions; the behaviorist gloss is no accident. However, this does not give us a full-fledged theory of the cognitive; it does not tell us what is distinctive about cognition, nor how cognition works or where to find it.

Examining different accounts of cognition from early psychology to contemporary Bayesian views, I find that while some views give a clear answer to what cognition should be taken to be or how it works, others focus on the question of where in the world to find cognitive processes. Problematically, though, we cannot answer one question without already having at least a preliminary answer to the other … (For more, check out this paper.)